The internet of things can help us manage our energy use even when we’re away from home, let people into our houses remotely, and make receiving packages easier. It can help us monitor our own families, home security issues, or grocery use... and it can help others monitor us.

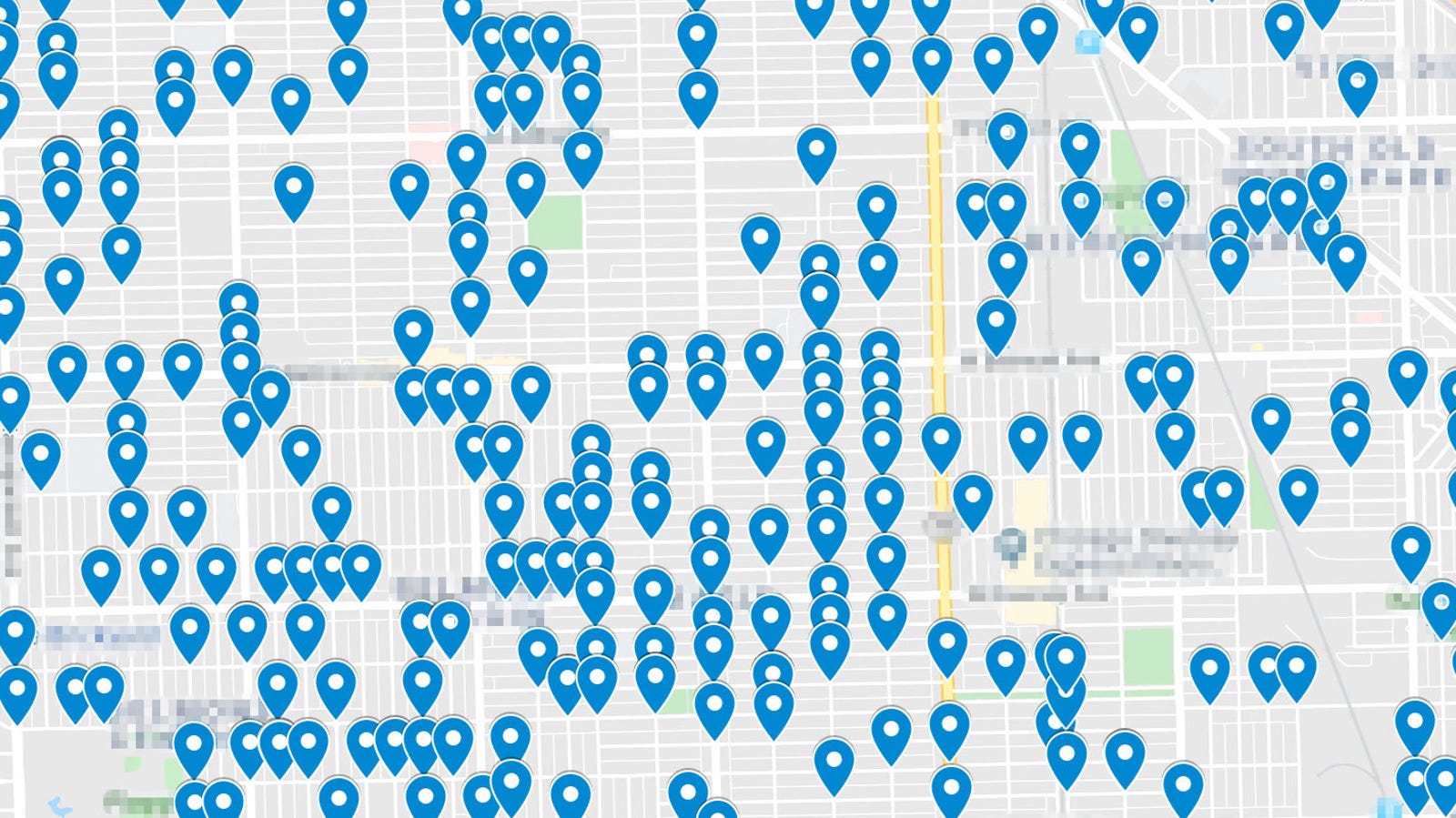

A recent Gizmodo investigation, for example, revealed that Ring, Amazon’s smart doorbell and home security system, had major security vulnerabilities despite a company pledge to protect user privacy. Gizmodo was able to uncover the locations of thousands of Ring devices within a randomly chosen area of Washington, DC. Although only the Ring users who chose to use the Neighbors app were revealed, this still represents a major vulnerability that is ripe for exploitation.