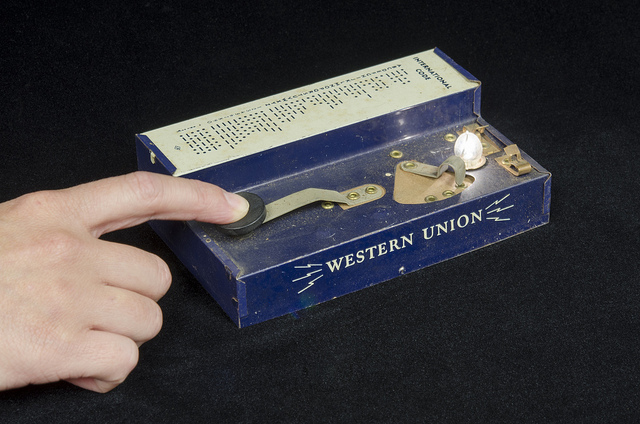

Considered by James Carey to be the first medium of electronic communication, the telegraph was a revolutionary development because it was the first medium to separate time and space for the purposes of communication. Before the telegraph, a message could move only as fast as a messenger could travel: on foot, by horse, or by railroad. After it, messages could travel faster than any messenger. This development had ripple effects on markets, democratic participation and community.

Fast forward to the first decade of this century, and suddenly we see a new separation beginning to emerge. Prior to the popular use of the internet and social and participatory media, our consumption of news was tied to specific times and spaces: the living room, the kitchen, the six o’clock news hour, mornings over breakfast. We devoted time, mental space, and physical space to the consumption of news in various forms, and those spaces and times structured our information consumption habits, needs and values. We relied on human producers and editors to prioritize information for us because we simply did not have the space or time for infinite news consumption.

But then came Facebook, Twitter, Web 2.0 and the 24/7 digital cable universe. Now we live in an environment where the separation of time and space has been reduced even further. We have the potential to be nearly constantly exposed to news from anywhere in the world. Our rituals for consuming this information are breaking down, or changing, and the ways in which this information is presented to us are also changing. Our consumption habits, for example, have pushed information into short sound bites, a “pivot to video”, posts of 280 characters or fewer, and image-heavy, entertaining formats.

Unfortunately, although infinite information seems to be available, we still cannot process, handle or analyze infinite information. Thus we require filters. Some we create ourselves, by carefully curating our friends lists or news feeds. Most are created for us: algorithms filter our Facebook timelines and Google searches, for example. The problem with these filters, in contrast to a human producer or editor, is that they are opaque: hard to see, and harder to understand. Even the people who program the algorithms do not always know what the algorithm will filter out or let through for any individual user. It’s a chaotic system, taking information from dozens of different cues or inputs. Because we can’t know precisely how these filtering decisions are made, it is difficult to hold the filters accountable when we have a problem like fake news. It is also difficult for us to find online spaces that offer us the mental white space or distance we need to think carefully about big issues.

We need to decide whether this new compression of time and space is supporting our communities or undermining them. We need to decide whether it’s prudent to hold organizations and governments accountable for public access to good information. We need to decide how and when to build new rituals around information consumption, and articulate a vision of what these new rituals should look like. But we can’t do any of these things if we don’t first teach ourselves, and others, how to look at algorithms and the technologies we use with a critical eye.

Excellent article, Jaigris!

Thank you!